NAB Show Isn’t Just a Trade Show. It’s Where the Media Industry Comes to Find Solutions to Its Real Problems.

Every April, right when spring settles over Las Vegas, the people who actually build the media industry – editors, broadcast engineers, streaming architects, ad tech leads – show up at the NAB Show. Their goal isn’t vague. They’re there to spot real problems in how media gets made, and the show helps them figure out what’s actually broken and who’s fixing it.

The 2026 edition, running April 18–22 at the Las Vegas Convention Center, has an unmistakable theme: AI isn’t experimental anymore. It’s operational. This year’s sessions feature real deployments from Microsoft, Google Cloud, and BBC Studios – not just demos, but real-world impact.

A second AI Innovation Pavilion appears at NAB Show 2026 – a sign of how quickly the conversation has shifted. Instead of asking what AI means, people are now asking where to start using it. More importantly, the focus is moving from experimental AI to scalable, production-grade deployments that deliver measurable ROI. The new space on the floor reflects that change.

We’re here for exactly that conversation. Gyrus AI will be at Booth W2300K inside the AI Innovation Pavilion, showing two tools built for problems today’s media teams face daily. One speeds up how quickly clips get found; the other places ads inside the content so smoothly they don’t pull attention away.

Semantic Media Search – Because “Search by Tag” Was Always a Lie:

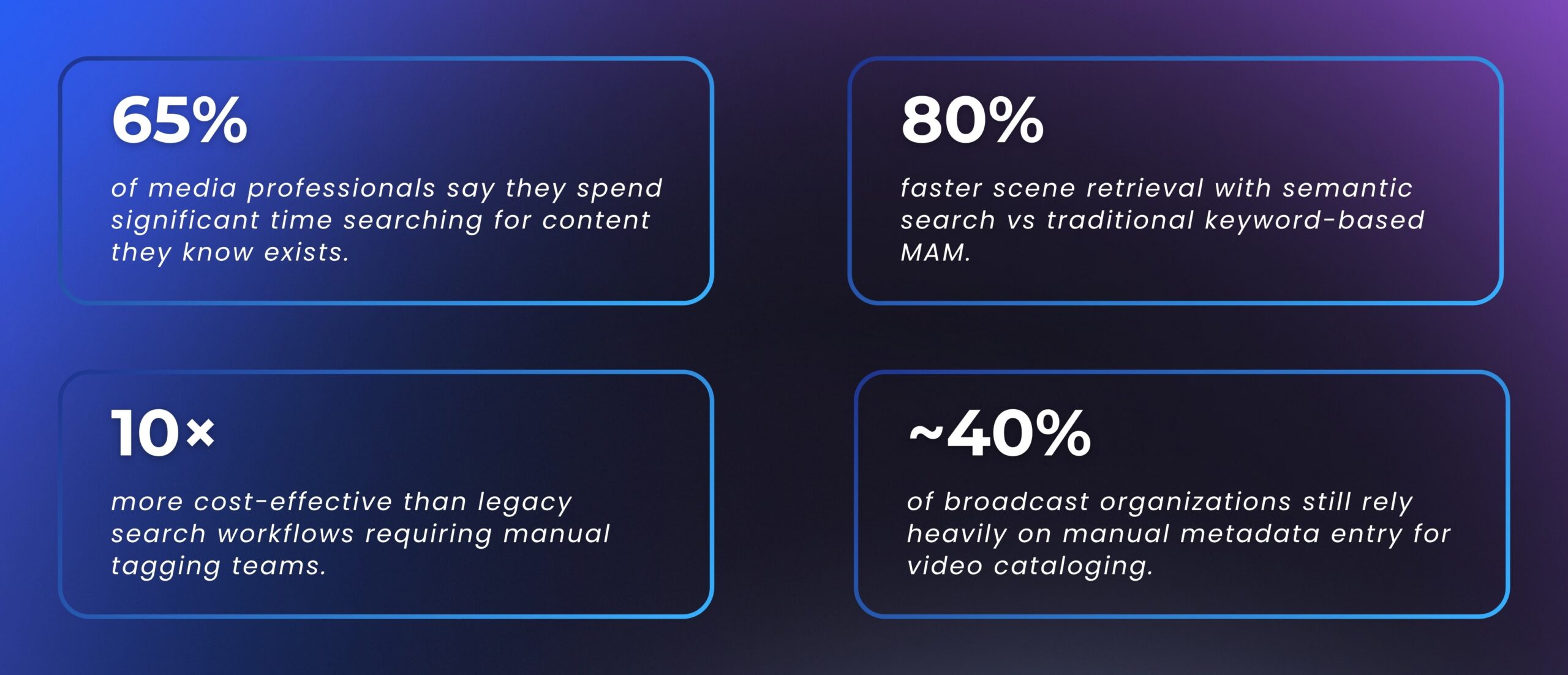

Here’s the real situation in most media organizations today:

The problem isn’t just storage. It’s retrieval. And retrieval has always been broken because traditional media asset management (MAM) search systems were built around keywords and manual metadata. Both require human effort to be accurate, and humans aren’t consistent.

Manual tagging becomes impractical and expensive at large scales. Humans make mistakes and miss relevant details.

What Makes It Actually Different?

This isn’t keyword search with better synonyms. It’s a different architecture altogether:

- Text Queries

- Image Queries

- Audio Understanding

- No Manual Tagging

- No Pre-existing Metadata

- Knowledge Graph Powered

- Domain-Trained AI
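To make the contrast with keyword search concrete, here is a minimal sketch of the general idea behind embedding-based retrieval: queries and clips are represented as vectors in a shared space and ranked by similarity, so no tags are needed. This is an illustration only, not Gyrus AI's implementation. The clip names, the three-dimensional vectors, and the `search` helper are all invented for the example; a real system would get its vectors from a trained multimodal encoder.

```python
import math

# Hand-written toy "embeddings" standing in for the output of a multimodal
# encoder. Clip names and numbers are invented purely for illustration.
CLIP_EMBEDDINGS = {
    "clip_001_goal_celebration": [0.9, 0.1, 0.0],
    "clip_002_press_conference": [0.1, 0.9, 0.1],
    "clip_003_stadium_aerial": [0.6, 0.0, 0.8],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query_embedding, index, top_k=2):
    """Return the names of the top_k clips most similar to the query."""
    scored = [
        (cosine_similarity(query_embedding, vector), name)
        for name, vector in index.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:top_k]]


# A query vector that, in this toy space, sits close to the "goal" clip.
# In practice it would come from encoding a text, image, or audio query.
results = search([1.0, 0.0, 0.1], CLIP_EMBEDDINGS)
print(results)
```

Because the query and the clips live in the same vector space, the same ranking step works whether the query started as text, an image, or audio. Only the encoder in front of it changes.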

Who This Is Built For:

- News broadcasters with decade-long archives that are technically searchable but practically useless.

- Post-production editors who waste billable hours hunting for clips they’ve seen before.

- Sports networks managing thousands of match hours that need frame-level retrieval.

- Streaming platforms trying to surface and reuse catalogue content efficiently.

- MAM platform vendors who want to layer AI intelligence onto existing infrastructure via API.

Virtual Product Placement – The Ad That Doesn’t Feel Like One.

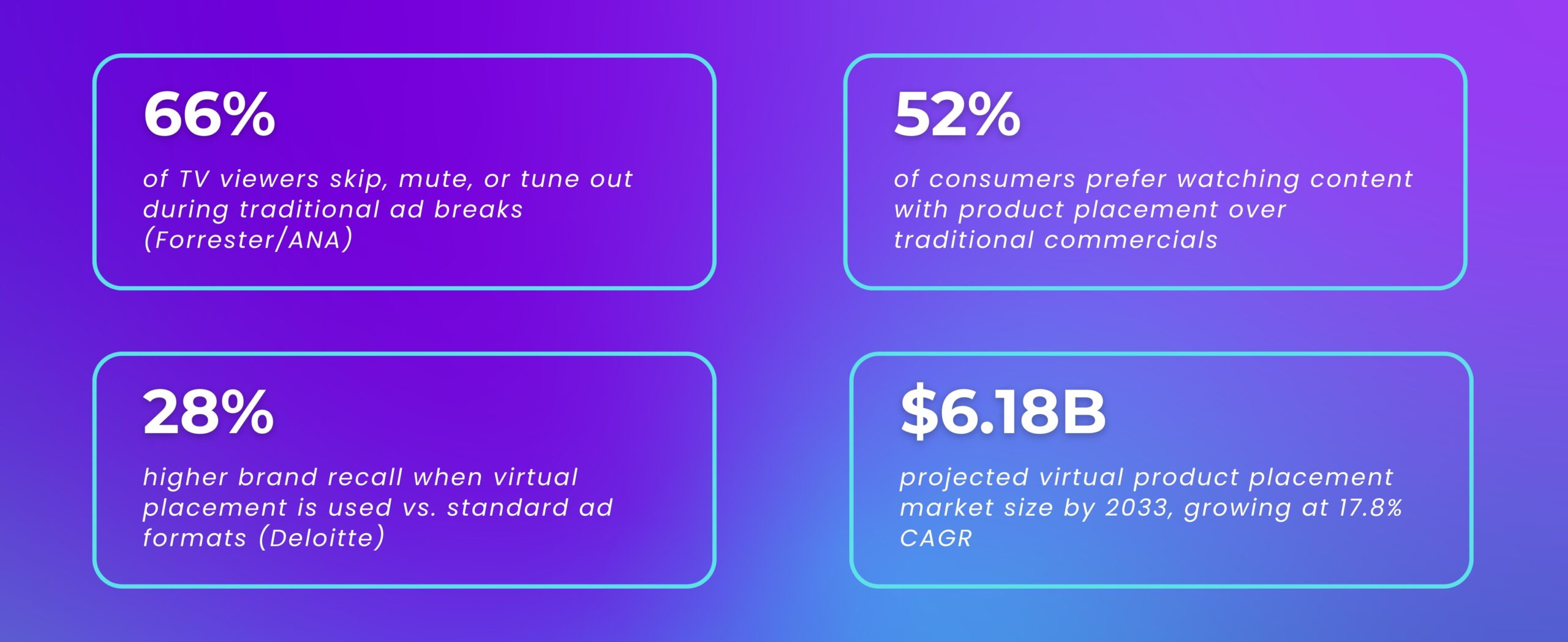

Here’s a number that should make every advertiser uncomfortable:

The audience is ahead of the industry on this. They don’t hate advertising – they hate interruption. Virtual product placement is the structural answer to that problem: brands appear inside the content, not between it.

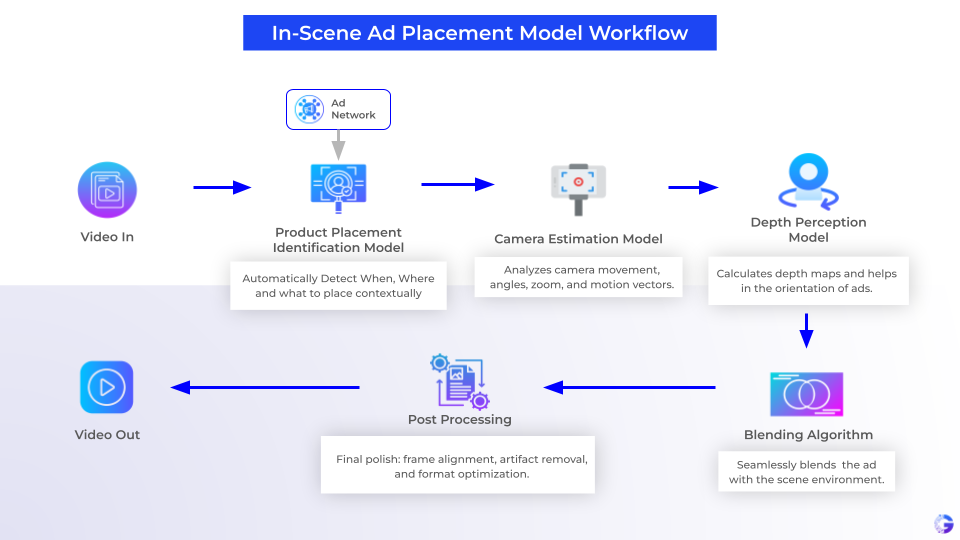

What’s Actually Happening Under the Hood?

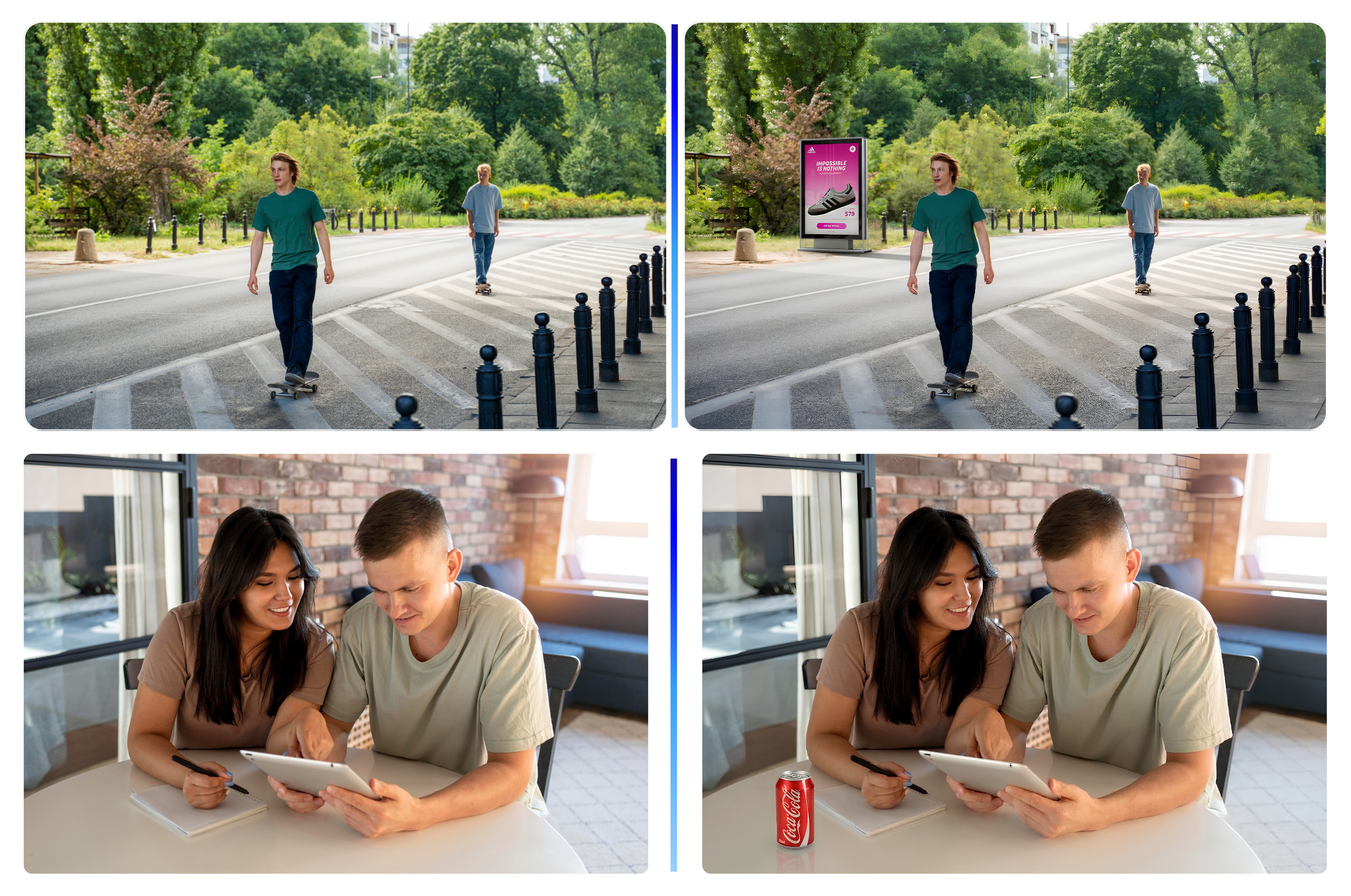

It’s not a simple overlay. Gyrus AI’s virtual product placement uses computer vision to:

- Identify surfaces, objects, and spatial planes within a scene that are contextually appropriate for brand insertion.

- Track camera motion frame-by-frame so the placed object moves naturally with the scene.

- Match lighting, shadow, and color temperature so the placement looks native – not pasted.

- Render 2D and 3D objects that hold their geometry as the camera angle shifts.

- Support dynamic localization – the same scene can show different brands in different markets.
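Two of the steps above, tracking and localization, can be sketched in a few lines. The sketch assumes the tracker produces a 3x3 planar homography per frame, which is a common computer vision technique but not something this post confirms about Gyrus AI's pipeline. The function names, the market table, and the file names are hypothetical.

```python
def apply_homography(H, point):
    """Map a 2D point through a 3x3 homography (list of three rows)."""
    x, y = point
    xp = H[0][0] * x + H[0][1] * y + H[0][2]
    yp = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    if w == 0:
        raise ValueError("point maps to infinity under this homography")
    return (xp / w, yp / w)


def place_quad(H, width, height):
    """Project the four corners of a width x height creative into the frame."""
    corners = [(0, 0), (width, 0), (width, height), (0, height)]
    return [apply_homography(H, corner) for corner in corners]


# Hypothetical market-to-creative table for dynamic localization.
BRAND_BY_MARKET = {"US": "brand_a.png", "DE": "brand_b.png"}


def creative_for(market, default="brand_a.png"):
    """Pick the creative for a market, falling back to a default."""
    return BRAND_BY_MARKET.get(market, default)


# One assumed per-frame homography: a pure shift of (10, 20) pixels.
frame_homography = [[1, 0, 10], [0, 1, 20], [0, 0, 1]]
quad = place_quad(frame_homography, 100, 50)
print(creative_for("DE"), quad)
```

Recomputing the quad from each frame's homography is what lets a placed object follow the camera. Lighting, shadow, and color matching are separate rendering steps that this sketch leaves out.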

How Virtual Product Placement Works – Technical Flow

Why Content Owners Should Care (Not Just Advertisers)

Existing catalogue content becomes a revenue asset, not just an archive. A library of 10,000 episodes can be retroactively monetized with contextual brand placements. No reshooting. No production disruption. New revenue from content that’s already paid for.

The Market Is Already Moving Here.

- Amazon Prime Video has adopted virtual placements at scale in shows like Reacher and Jack Ryan.

- NBCUniversal’s Peacock launched programmatic VPP tools, monetizing back-catalogue content like The Office.

- Research shows up to a 35% increase in purchase intent when VPP is used alongside traditional advertising.

- 75% of consumers have searched for a product after seeing it in a TV show or film – proving that in-content exposure actually drives action.

What Powers All of This.

Both products share a common technical foundation – which is why they work at scale:

- Multi-Modal AI (text + image + audio)

- Knowledge Graph Architecture

- Domain-Specific Training

- On-Premise Deployment

- REST / GraphQL API

- AWS + GCP Compatible

- GDPR-Ready

One integration, two products. Whether you’re connecting to an existing MAM system or building a new ad insertion pipeline, Gyrus AI embeds via API without requiring a platform overhaul. It works with S3, Google Cloud Storage buckets, NAS, and existing local archives.

See It Live at NAB 2026.

Visit us at Booth W2300K in the AI Innovation Pavilion, April 19–22, at the LVCC. We’ll be running live demos of both semantic media search and virtual product placement on real media libraries.

The NAB Floor Is Full of Future.

Come See Ours.

There’s no shortage of AI at NAB 2026. What’s rarer is AI that solves a specific operational problem without requiring a six-month integration project.

Semantic media search and virtual product placement are both live, production-deployed, and ready to show. Not a roadmap. Not a concept. Working software, on real libraries, at real scale.

If your team is still hunting for clips manually, or still interrupting viewers with ads they skip – those are solvable problems. Come find us at W2300K and let’s talk about what that looks like for your workflow.