Las Vegas never really sleeps, and neither does the media industry. The 2026 NAB Show at the Las Vegas Convention Center was proof of that – four packed days, over 58,000 registered attendees from 146 countries, more than 1,100 exhibitors, and an energy that felt different from years past. Not louder, just more certain.

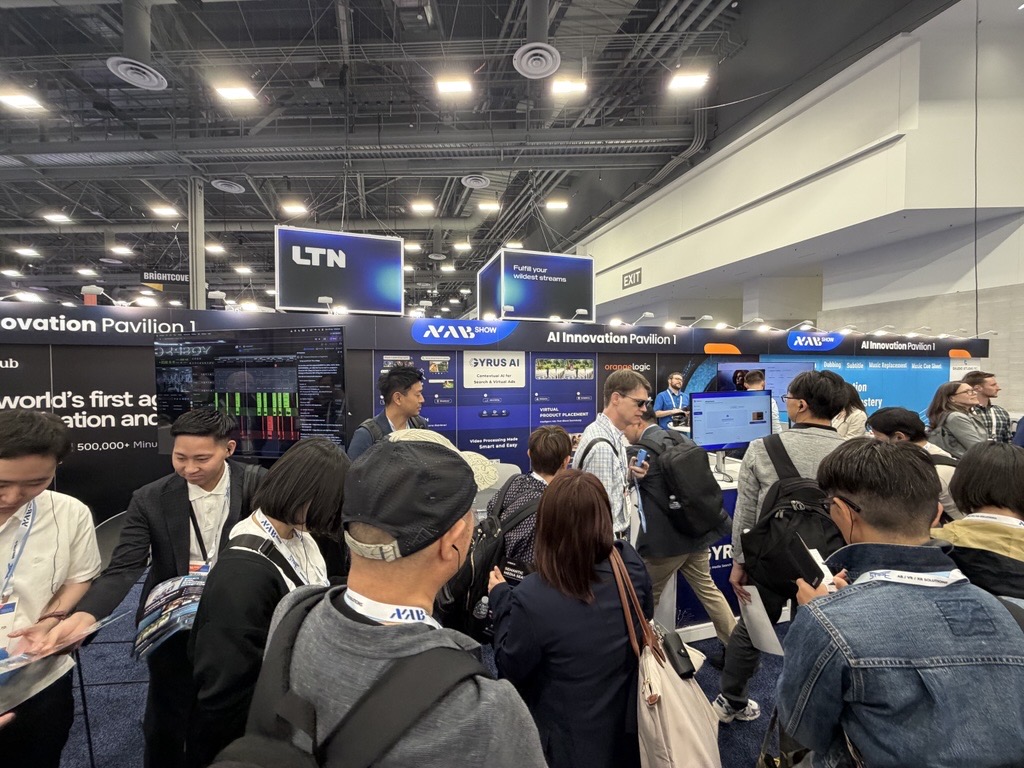

We were there as Gyrus AI, in booth W2300K at the AI Innovation Pavilion, and it was one of the most rewarding experiences we’ve had as a team.

The Show Felt Different This Year

If you’ve been to NAB in recent years, you’ve probably noticed the gradual shift. A few years ago, AI conversations at the show were mostly hypothetical – “what could this do,” “where is this headed,” “is this really ready?” This year, those questions were largely gone.

As NAB itself put it ahead of the show, the global media and entertainment industry is no longer in an experimental phase – it’s in an execution phase. AI has moved into core workflows. The conversations on the floor weren’t about whether to adopt AI; they were about how fast and how well.

Two dedicated AI Pavilions anchored this shift. Being in the AI Innovation Pavilion meant we were right in the middle of where the real conversations were happening, and there were a lot of them.

What People Were Actually Asking About

Here’s something we noticed quickly: the visitors coming to our booth were sharp. These weren’t people casually browsing. They were heads of technology, content operations leaders, product executives, and broadcast engineers who had done their homework and came with specific questions.

Both of our products, Semantic Media Search and Virtual Product Placement, generated valuable conversations, and the interest was consistent throughout all four days.

With Semantic Media Search, the questions weren’t about what the product does at a surface level. People got that quickly. The deeper conversations were about scale. How does it handle petabytes of video data across hundreds of thousands of hours? How does it understand context, not just keywords?

That last question is exactly what contextual AI is built for, and it’s where we spent a lot of time explaining the difference between AI that pattern-matches and AI that actually understands meaning.

For media companies sitting on vast archives of content, this distinction is everything. Being able to find the right clip in a 50,000-hour library in seconds, based on what’s actually happening in the video rather than a text tag someone added years ago, is no longer a nice-to-have. It’s a competitive advantage.

Virtual Product Placement attracted a different kind of conversation, but an equally energized one. Broadcasters, streaming platform executives, and ad tech folks were genuinely curious about the workflow.

Conversations quickly moved to how contextual placement works: deciding when, where, and what products to place within a scene. There was also interest in how the system identifies natural moments for ad breaks and interactive ads, and in how brands can be integrated through both 2D and 3D placements within existing content.

Several discussions went well beyond a demo and into the specifics of integration and pilots, especially how to fit the system seamlessly into existing monetization pipelines.

The Contextual AI Conversation: This Is the Moment

If there’s one theme we’d take away from the entire show, it’s this: contextual AI is no longer ahead of its time. It’s right on time.

The media industry has been talking about personalization, relevance, and intelligent content for years. What’s changed is that the infrastructure has caught up with the ambition. The models are smarter, the costs have dropped dramatically, and the use cases are proven.

Speaking of costs, the topic came up more than we expected, and it’s a conversation worth having openly. A year or two ago, one of the real hesitations around deploying AI at scale was the cost of running these models.

Token costs (essentially the “per-use” cost of querying an AI model) were high enough that running queries across millions of assets or interactions was hard to justify. That math has changed. LLM pricing dropped roughly 80% across the industry from 2025 to 2026, and over a longer period, what used to cost $30 per million tokens for GPT-4-level performance now costs under $1. That shift fundamentally changes the ROI conversation for media companies considering AI at scale.

Today, cost conversations are far more practical. Teams are looking closely at deployment flexibility: whether workloads run in the cloud, on-premises, or closer to the edge, depending on their infrastructure and security requirements. There’s also growing interest in open-source models, which give organizations more control over cost and customization.

The focus is shifting from whether AI is affordable to how to architect it so that it makes economic and operational sense at scale.

When you can run intelligent, contextual search or automated content analysis across an entire library affordably and at speed, the decision becomes much easier. We saw this reflected in how visitors engaged with us. There was less “this is interesting but expensive” and more “okay, how do we start?”
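To make the token-cost arithmetic concrete, here is a minimal back-of-the-envelope sketch in Python. The two per-million-token prices ($30 and $1) come from the figures quoted in this post; the asset count and tokens-per-asset values are hypothetical placeholders, not measurements from any real deployment. The sketch also assumes a single flat price per token, whereas real pricing often differs for input and output tokens.

```python
def processing_cost_usd(num_assets: int, tokens_per_asset: int, usd_per_million_tokens: float) -> float:
    """Estimate the cost of one LLM pass over a content library.

    Assumes a flat price per token (real APIs often price input and
    output tokens differently).
    """
    total_tokens = num_assets * tokens_per_asset
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical workload: 1 million assets at ~2,000 tokens each
# (e.g. transcript snippets or metadata). Placeholder values only.
NUM_ASSETS = 1_000_000
TOKENS_PER_ASSET = 2_000

old_cost = processing_cost_usd(NUM_ASSETS, TOKENS_PER_ASSET, 30.0)  # $30 per million tokens
new_cost = processing_cost_usd(NUM_ASSETS, TOKENS_PER_ASSET, 1.0)   # $1 per million tokens (upper bound of "under $1")
reduction = 1 - new_cost / old_cost  # fraction of the original cost saved

print(f"Cost at $30/M tokens: ${old_cost:,.0f}")
print(f"Cost at $1/M tokens:  ${new_cost:,.0f}")
print(f"Reduction: {reduction:.1%}")
```

With these placeholder numbers, the same pass over the library falls from $60,000 to $2,000, a 96.7% reduction. Because cost scales linearly with token volume in this simple model, the ratio stays the same for any library size.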

A Quick Word on the Show Itself

NAB 2026 brought together global brands like Sony, Canon, Adobe, AWS, Google Cloud, and hundreds of others across a show floor that spanned nearly eight football fields. With over 530 conference sessions and around 900 speakers, there was no shortage of learning and debate.

The overall mood felt optimistic but practical. This wasn’t a show full of vague promises. People came with real problems and were looking for real solutions.

A few broader trends stood out across many conversations:

- AI in Production: AI is moving from experimentation to deployment in real production workflows.

- Creator-to-CEO Shift: The creator economy has matured into a professional enterprise segment, demanding high-end tools for IP management and monetization.

- DTC Sports Innovation: Leagues are bypassing traditional gatekeepers and going direct-to-consumer (DTC), acting as their own media houses and using real-time data to personalize fan experiences.

- Frictionless Cloud Production: The industry is moving toward fully unified “camera-to-cloud” workflows that eliminate silos between capture and final delivery.

- Ad-Tech Integration: New monetization models are prioritizing contextual, non-disruptive advertising that blends seamlessly into the viewing experience.

- Virtual Production for All: Real-time rendering and virtual sets have become affordable enough for mid-tier creators and enterprise-level corporate media.

What We’re Taking Forward

Coming back from a show like NAB, it’s easy to get caught up in the energy of it all. But beyond the conversations and the connections, a few things stand out as genuinely meaningful for us as a company:

The market for contextual AI in media is real, it’s growing, and it’s ready. The shift from “experimental” to “operational” is happening faster than many expected, and the companies that move now will be the ones setting the standard for the next few years.

We came to NAB Show 2026 to share what we’ve built. We left with a clearer sense than ever that what we’re building is exactly what this industry needs, right now.

If you were at the show and stopped by booth W2300K – thank you. And if you didn’t get a chance to connect, we’d love to continue the conversation.

Gyrus AI builds contextual AI products for the media and entertainment industry. Our flagship products, Semantic Media Search and Virtual Product Placement, are designed to help media companies unlock the value of their content at scale.